AI

The Ultimate Guide to ChatGPT: Features, Updates, and Ethical Impacts in 2025

April 5, 2025 – ChatGPT has transformed the way we interact with artificial intelligence since its launch in 2022. As one of the most advanced AI chatbots, developed by OpenAI, ChatGPT continues to evolve with new features, growing user numbers, and significant ethical debates. This comprehensive guide explores ChatGPT’s history, its latest updates in 2025, practical applications, challenges, and its broader impact on technology and society, providing everything you need to know about this groundbreaking AI tool.

What Is ChatGPT?

A Brief History of ChatGPT

ChatGPT, created by OpenAI, launched in November 2022 and quickly became a global phenomenon. Built on the GPT (Generative Pre-trained Transformer) architecture, it was designed for natural language understanding, enabling human-like conversations. Within five days, ChatGPT gained 1 million users, a milestone that took Netflix over three years to achieve, as noted in a historical overview. By February 2025, ChatGPT had reached 400 million weekly active users, double the 200 million reported in August 2024, and was processing over 1 billion queries daily, according to a TechCrunch report on its growth. OpenAI’s revenue also soared, hitting $3.7 billion in 2024 with a projected $11 billion for 2025, per the same TechCrunch report.

How ChatGPT Works

ChatGPT operates on OpenAI’s GPT-4o model as of 2025, an advanced multimodal AI capable of processing text, images, and audio. It uses machine learning to generate responses based on patterns learned from vast datasets, allowing it to answer questions, write essays, produce code, and more. Recent integrations, like support for Anthropic’s Model Context Protocol (MCP), let ChatGPT pull data from external sources such as business tools, improving response accuracy, as detailed in a summary of updates. Users interact with ChatGPT through text prompts, with premium features available through the ChatGPT Plus ($20/month), Team, and Pro plans.
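To make the MCP integration more concrete, here is a minimal sketch of the kind of message an MCP client sends when it asks a server to run a tool. MCP messages follow JSON-RPC 2.0, and `tools/call` is the method used to invoke a tool. The tool name `search_documents` and its arguments are hypothetical, chosen only for illustration, and the sketch does not show how ChatGPT itself is wired to MCP.

```python
import json
from itertools import count

# Simple counter so every request gets a unique JSON-RPC id.
_ids = count(1)


def mcp_tool_call(tool_name, arguments):
    """Build a JSON-RPC 2.0 request asking an MCP server to run one tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool exposed by a hypothetical business-tool server.
request = mcp_tool_call("search_documents", {"query": "Q1 sales summary"})
print(json.dumps(request, indent=2))
```

The server's reply would carry the matching `id`, which is how the client pairs results with the question that triggered them.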

ChatGPT Features and Updates in 2025

Core Capabilities

ChatGPT excels in various tasks:

- Text Generation: Writing essays, emails, and creative stories.

- Coding Assistance: Generating and debugging code in languages like Python, as noted in a use case study.

- Image Generation: Powered by DALL·E 3, ChatGPT can create images, now including public figures and controversial symbols, a policy shift in 2025, as highlighted in a discussion on policy changes.

- Data Analysis: Running regressions and visualizing metrics, per OpenAI’s release notes.

New Features in 2025

ChatGPT’s 2025 updates enhance its functionality:

- Task Reminders: A beta feature for Plus, Team, and Pro users allows scheduling reminders, such as passport expiration alerts, with push notifications, as detailed in the TechCrunch report.

- Custom Instructions: Users can now assign traits like “Encouraging” or “Gen Z” and nicknames, though some reported options disappearing, suggesting a premature rollout, per TechCrunch.

- Image Generation Policy Shift: ChatGPT can now generate images of public figures and hateful symbols, a change driven by its viral Studio Ghibli-style generator, as noted in the Inkl article. This has raised concerns about misuse, such as creating deepfakes.

- Web Search Without Login: ChatGPT now offers web search capabilities without requiring a login, making it more accessible, per TechCrunch.

Advanced Features for Premium Users

Premium users gain access to advanced models like GPT-4o, which offers improved coding and instruction-following capabilities, as well as features like “Browse with Bing” and the ability to create custom GPTs for specific tasks, per OpenAI’s release notes. The GPT Store, launched in 2024, allows users to monetize their GPTs, further expanding ChatGPT’s ecosystem.

Practical Applications of ChatGPT

Everyday Use Cases

ChatGPT’s versatility makes it a valuable tool for various audiences:

- Students: Assisting with research, essay writing, and math problems, as noted in the use case study.

- Professionals: Automating customer service, drafting emails, and generating reports.

- Developers: Writing and debugging code, with GPT-4o producing cleaner frontend code, per OpenAI’s release notes.

- Creatives: Generating story ideas, designing stickers, and creating AI-powered podcasts through premium features.

Business and Industry Impact

Businesses leverage ChatGPT for efficiency. Platforms like Koo use GPT models to auto-generate blog posts, boosting user engagement, as highlighted in the use case study. Enterprise products such as Anthropic’s Claude Enterprise integrate with tools like Google Drive and Slack via MCP, the same protocol OpenAI has adopted, enabling seamless workflows, as noted in a report on MCP adoption. In the U.S., where 37% of adults use AI for work tasks (2024 Pew Research survey), ChatGPT’s applications are particularly impactful in tech hubs like San Francisco and Seattle.

Ethical and Environmental Challenges

Misinformation and Bias

ChatGPT’s ability to “hallucinate”—generating plausible but incorrect answers—remains a concern, as explained in a Wikipedia entry. It can perpetuate biases from its training data, potentially spreading misinformation, especially with relaxed image generation rules, as discussed in a report on AI ethics. OpenAI mitigates this by flagging potentially false outputs, but the risk persists, particularly on divisive topics.

Privacy Concerns

Privacy issues have plagued ChatGPT, with a 2023 bug exposing users’ chat titles, as noted in the Wikipedia entry. OpenAI’s privacy policy allows the company to use data fed into ChatGPT, raising concerns about data security, especially in educational settings, as highlighted in a Brandeis University report. Users are advised to avoid sharing sensitive information, and educators are encouraged to allow opt-outs for students.

Environmental Impact

ChatGPT’s environmental footprint is significant. It consumes 0.3 watt-hours per query and 0.5 liters of water per prompt series for server cooling, per Epoch AI, as reported in a study on AI’s environmental impact. With over 1 billion daily queries, this adds up, prompting calls for more sustainable AI practices.
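As a rough back-of-the-envelope check on how these figures add up, the sketch below multiplies the cited 0.3 watt-hours per query by 1 billion queries per day. It treats "over 1 billion" as exactly 1 billion, so the result is a lower bound. Water use is left out because the source does not say how many prompts make up a "prompt series".

```python
WH_PER_QUERY = 0.3               # watt-hours per query, as cited above
QUERIES_PER_DAY = 1_000_000_000  # "over 1 billion" daily queries, lower bound

daily_wh = WH_PER_QUERY * QUERIES_PER_DAY
daily_mwh = daily_wh / 1_000_000      # 1 MWh = 1,000,000 Wh
yearly_gwh = daily_mwh * 365 / 1_000  # 1 GWh = 1,000 MWh

print(f"{daily_mwh:.0f} MWh per day, about {yearly_gwh:.1f} GWh per year")
# prints: 300 MWh per day, about 109.5 GWh per year
```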

ChatGPT’s Broader Impact on Technology

Influence on AI Development

ChatGPT has set the standard for conversational AI, influencing competitors like Anthropic’s Claude and Google’s Gemini. Its adoption of MCP, originally developed by Anthropic, reflects a collaborative trend in AI, as noted in the TechCrunch MCP report. OpenAI’s focus on multimodal AI—handling text, images, and potentially video—aligns with industry trends, as seen in Microsoft Copilot’s recent updates and Google Gemini’s iOS widgets.

Cultural and Social Implications

ChatGPT’s cultural impact is evident in its viral features, like the Studio Ghibli-style image generator, which has inspired fan art and memes across platforms like Instagram and TikTok. Its conversational abilities have also sparked debates about AI’s role in education, with some schools banning its use due to cheating concerns, while others integrate it as a learning tool, as discussed in the Brandeis report. Socially, ChatGPT has raised questions about job displacement, with 19% of U.S. workers worried about AI replacing their roles, per a 2024 Gallup poll.

ChatGPT in Entertainment and Media

ChatGPT’s creative applications extend to entertainment. It can generate scripts, storyboards, and even AI-powered podcasts, a premium feature introduced in 2024. Media outlets use ChatGPT to draft articles or summarize news, though concerns about accuracy persist, as noted in the AI ethics report. The tool’s image generation capabilities have also been used in marketing, creating viral campaigns, such as the Studio Ghibli-style art that gained traction in early 2025, per the Inkl article.

ChatGPT vs. Competitors

ChatGPT vs. Claude

Anthropic’s Claude, a direct competitor, focuses on safety and transparency, often outperforming ChatGPT in tasks requiring nuanced reasoning, as noted in a comparison study. However, ChatGPT’s broader user base and multimodal capabilities give it an edge in versatility, especially with recent updates like task reminders and image generation.

ChatGPT vs. Google Gemini

Google’s Gemini, integrated into Android devices, competes with ChatGPT through tight device integration, including lock screen widgets on iPhone, as seen in Google Gemini’s iOS features. While Gemini excels in search and smart home applications, ChatGPT leads in conversational depth and creative tasks, per OpenAI’s release notes.

Tips for Using ChatGPT Effectively

Best Practices for Users

To maximize ChatGPT’s potential:

- Be Specific with Prompts: Detailed prompts yield better results, e.g., “Write a 500-word essay on climate change for a college audience” vs. “Write about climate change.”

- Verify Outputs: Cross-check factual information, especially for academic or professional use, due to the risk of hallucinations.

- Use Custom Instructions: Tailor ChatGPT’s tone and style to your needs, such as setting it to “Professional” for work tasks.

- Protect Privacy: Avoid sharing personal data, as OpenAI may use inputs for training, per its privacy policy.

Advanced Tips for Premium Users

Premium users can leverage features like custom GPTs to create specialized assistants, such as a “Math Tutor” or “Marketing Strategist.” The GPT Store offers pre-built GPTs, which can be customized further, as noted in OpenAI’s release notes. Additionally, using “Browse with Bing” allows ChatGPT to fetch real-time data, ideal for research or news summaries.

The Future of ChatGPT

Upcoming Features and Innovations

OpenAI continues to innovate, with leaks suggesting future updates like video generation and deeper integration with IoT devices, in line with trends in smart tech ecosystems. OpenAI’s shift toward enterprise solutions, like ChatGPT Enterprise, indicates a focus on business applications, potentially integrating ChatGPT with tools like Microsoft Teams or Salesforce, as speculated in the TechCrunch MCP report.

Long-Term Implications

ChatGPT’s trajectory points to a future where AI becomes a seamless part of daily life, from education to healthcare. However, its growth raises questions about regulation, with lawmakers in the U.S. and EU debating AI safety laws, as noted in the AI ethics report. Balancing innovation with responsibility will be key to ChatGPT’s long-term success, ensuring it benefits society without exacerbating issues like misinformation or environmental strain.

Conclusion: ChatGPT’s Role in the AI Revolution

ChatGPT has redefined AI interaction, offering unparalleled versatility and accessibility. Its 2025 updates, from task reminders to image generation, cement its position as a leader in conversational AI, while its 400 million weekly users highlight its global reach. Yet, challenges like misinformation, privacy concerns, and environmental impact underscore the need for responsible development. As ChatGPT continues to evolve, it remains a pivotal tool in the AI revolution, shaping how we learn, work, and create. For more on AI trends, explore our guides on Microsoft Copilot updates and AI in smart homes.

OpenAI Launches Image Generation API, Bringing DALL-E Powers to Developers

OpenAI has released its advanced image generation technology as an API, allowing developers to integrate the powerful AI image creation capabilities directly into their applications. This move significantly expands access to the technology previously available primarily through ChatGPT and other OpenAI-controlled interfaces.

The newly released API gives developers programmatic access to the same image generation model that powers ChatGPT’s visual creation tools. Companies can now incorporate sophisticated AI image generation into their own applications without requiring users to interact with OpenAI’s platforms directly.

“We’re making our image generation models available via API, allowing developers to easily integrate image generation into their applications,” OpenAI stated in its announcement. The company emphasized that the API has been designed with both performance and responsibility in mind, implementing safety systems similar to those used in its consumer-facing products.

The image generation API supports a wide range of capabilities, including creating images from text descriptions, editing existing images with text instructions, and generating variations of uploaded images. Developers can specify parameters such as image size, style preferences, and quality levels to customize outputs for their specific use cases.
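A text-to-image request with these parameters might look like the sketch below. It only builds the HTTP request for OpenAI's image generations endpoint and does not send it. The model name `dall-e-3` and the `size` and `quality` values are assumptions for illustration; accepted values vary by model, so check the current API reference before relying on them. The helper name `build_image_request` is also hypothetical.

```python
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/images/generations"


def build_image_request(prompt, size="1024x1024", quality="standard",
                        n=1, model="dall-e-3", api_key=None):
    """Build (but do not send) a POST request for one image generation call."""
    payload = {
        "model": model,      # assumed model name; swap in the one you use
        "prompt": prompt,    # text description of the desired image
        "n": n,              # number of images to generate
        "size": size,        # output resolution
        "quality": quality,  # quality tier; valid values depend on the model
    }
    key = api_key or os.environ.get("OPENAI_API_KEY", "")
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {key}",
        },
        method="POST",
    )


req = build_image_request("a watercolor fox in a snowy forest",
                          api_key="YOUR_API_KEY")
print(req.get_method(), req.full_url)
```

Sending the request is then a matter of passing `req` to `urllib.request.urlopen` with a real key. Keeping construction separate from sending makes the payload easy to inspect and test.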

Major software companies have already begun implementing the technology. Design and creative software leaders like Adobe and Figma are among the first partners to integrate the API into their products, enabling users to generate images directly within their existing workflows rather than switching between multiple applications.

The API operates on a usage-based pricing model, with costs calculated based on factors including image resolution, generation complexity, and volume. Enterprise customers with specialized needs can access custom pricing plans and dedicated support channels, while smaller developers can get started with standard plans.

Security and content moderation remain central to the implementation. OpenAI has incorporated safety mechanisms to prevent the generation of harmful, illegal, or deceptive content. The system includes filters for violent, sexual, and hateful imagery, as well as protections against creating deepfakes of real individuals without proper authorization.

“This represents a significant step in making advanced AI capabilities more accessible to developers of all sizes,” said technology analyst Maria Rodriguez. “Previously, building this level of image generation required massive resources and expertise that most companies simply didn’t have.”

Industry experts note that the API’s release will likely accelerate the integration of AI-generated imagery across a wide range of applications, from e-commerce product visualization to educational tools and creative software. The programmable nature of the API allows for more customized and contextual image generation compared to using standalone tools.

For enterprises looking to incorporate image generation into their products, the API offers advantages including reduced latency, customization options, and the ability to maintain users within their own ecosystems rather than redirecting them to external AI tools.

The release comes amid growing competition in the AI image generation space, with competitors like Midjourney, Stable Diffusion, and Google’s image generation models all vying for developer and enterprise adoption. OpenAI’s strong brand recognition and the widespread familiarity with DALL-E through ChatGPT give it certain advantages, though pricing and performance factors will influence adoption rates.

Developers interested in implementing the image generation API can access documentation and begin integration through OpenAI’s developer portal. The company provides code examples in popular programming languages and comprehensive guides for common use cases to streamline the implementation process.

OpenAI emphasizes that all API users must adhere to its usage policies, which prohibit applications that could cause harm or violate the rights of others. The company maintains the ability to monitor API usage and can suspend access for applications that violate these terms.

As AI-generated imagery becomes increasingly mainstream, ethical considerations around disclosure and transparency continue to evolve. Many platforms require or encourage disclosure when AI-generated images are used commercially, and OpenAI recommends that developers implement similar transparency measures in their applications.

The API release represents OpenAI’s continued strategy of first developing advanced AI capabilities for direct consumer use before making them available as programmable services for the broader developer ecosystem. This approach allows the company to refine its models and safety systems before wider deployment while maintaining some level of oversight regarding how its technology is implemented.

Columbia Student Suspended for AI Cheating Tool Secures $5.3M in Funding

Former Columbia University student Jordan Chong has transformed academic punishment into entrepreneurial opportunity by securing $5.3 million in seed funding for his controversial AI startup. The 21-year-old, who was suspended from the prestigious university for creating an AI interview cheating tool, has now founded Cluely, a company focused on developing AI tools for interview assistance.

“I got kicked out of Columbia for building an AI tool that helped me cheat on class interviews,” Chong stated in recent interviews. Rather than abandoning his project after facing serious academic consequences, the young entrepreneur refined his technology and attracted significant investor interest.

According to TechCrunch, the $5.3 million seed round was led by Founders Fund, with participation from several angel investors who recognized potential in Cluely’s approach to AI-assisted communication. This funding success comes during a challenging period for AI startups, with venture capital investment in the sector showing a notable decline in recent months.

Cluely is out. cheat on everything. pic.twitter.com/EsRXQaCfUI

— Roy (@im_roy_lee) April 20, 2025

The Technology Behind Cluely

Cluely’s technology analyzes patterns in interview questions and generates contextually appropriate responses based on an extensive database of successful answers. The system can provide real-time suggestions during interviews, helping users respond more effectively to unexpected questions.

The application initially focused on academic settings but has expanded to cover job interviews and other professional assessments. Users can access Cluely’s suggestions through mobile applications and browser extensions designed to operate discreetly during interview situations.

“Our technology isn’t just about providing answers,” Chong explains. “It’s about augmenting human capabilities in situations where people often struggle to perform their best due to anxiety or limited preparation time.”

Reports from Digital Watch describe the same mechanism and note that, because users can call up suggestions through several interfaces, the tool enables what some consider an unfair advantage in assessment situations.

Ethical Concerns and Academic Integrity

The emergence and funding of Cluely have sparked intense debate within educational and professional communities. Academic institutions, including Columbia University, have expressed concerns about tools that potentially undermine the integrity of assessment processes.

“When we evaluate students or job candidates, we’re trying to gauge their actual knowledge and abilities,” explained Dr. Michael Chen, Dean of Student Affairs at a prominent East Coast university. “Tools that artificially enhance performance risk making these assessments meaningless.”

Maeil Business Newspaper reports that many universities are already adapting their interview processes to counter AI-assisted cheating. Some have implemented stricter monitoring protocols, while others are moving toward assessment methods that are more difficult to circumvent with AI assistance.

Educational technology experts suggest that Cluely represents a new frontier in the ongoing balance between assessment integrity and technological advancement. “We’ve dealt with calculators, internet access, and basic AI tools,” noted education technology researcher Dr. Lisa Rodriguez. “But real-time interview assistance takes these challenges to a completely different level.”

Growing Market Despite Controversy

Despite ethical concerns, market analysts predict significant growth in AI-assisted communication tools. The global market for such technologies is projected to reach $15 billion by 2027, according to recent industry reports.

Cluely is positioning itself at the forefront of this emerging sector. The company plans to use its newly secured funding to expand its team, enhance its core technology, and develop new features targeting various interview and assessment scenarios.

“We’re currently focused on interview preparation and assistance,” Chong stated, “but our vision extends to supporting all forms of high-stakes communication, from negotiation to public speaking and beyond.”

FirstPost highlights that while the company markets its product as an “AI communication assistant,” many educators view it as explicitly designed for cheating. This perception stems from Chong’s own admission about the tool’s origins and its “cheat on everything” tagline that has appeared in some marketing materials.

Regulatory Landscape and Future Challenges

As AI communication tools like Cluely gain traction, they face an evolving regulatory landscape. Several states are considering legislation that would require disclosure when AI assistance is used in academic or professional settings.

“We anticipate increased regulatory attention as our technology becomes more widespread,” acknowledged Chong. “We’re committed to working with regulators to find the right balance between innovation and protecting the integrity of assessment systems.”

Legal experts suggest that the coming years will see significant development in how AI-assisted communication tools are regulated, particularly in educational and employment contexts. Some predict requirements for disclosure when such tools are used, while others anticipate technical countermeasures to detect AI assistance.

Adapting Assessment Methods for the AI Era

The rise of tools like Cluely is forcing educational institutions and employers to reconsider traditional assessment methods. Many are already shifting toward evaluation approaches that are more difficult to game with AI assistance.

“We’re seeing increased interest in project-based assessments, collaborative problem-solving exercises, and demonstrations of skills in controlled environments,” explained Dr. Jennifer Wise, an expert in educational assessment. “The goal is to evaluate capabilities in ways that AI can’t easily enhance.”

Some forward-thinking organizations have embraced AI as part of the assessment process, explicitly allowing candidates to use AI tools while focusing evaluation on how effectively they leverage these resources.

The Future of Human-AI Collaboration

Beyond the immediate context of interviews and assessments, Cluely represents a broader trend toward AI-augmented human performance. This trend raises fundamental questions about how we define and value human capabilities in an era of increasingly sophisticated AI assistance.

For Chong and Cluely, these philosophical questions take a back seat to the immediate business opportunity. With $5.3 million in fresh funding, the company is poised for rapid growth despite its controversial origins.

As TechCrunch notes, Cluely’s success highlights the complex relationship between academic integrity and technological innovation. While educational institutions grapple with how to maintain assessment validity, entrepreneurs like Chong are capitalizing on the demand for tools that enhance human performance—regardless of the ethical implications.

Instagram’s AI Teen Detection: The 2025 Surprise You’ll Want to See!

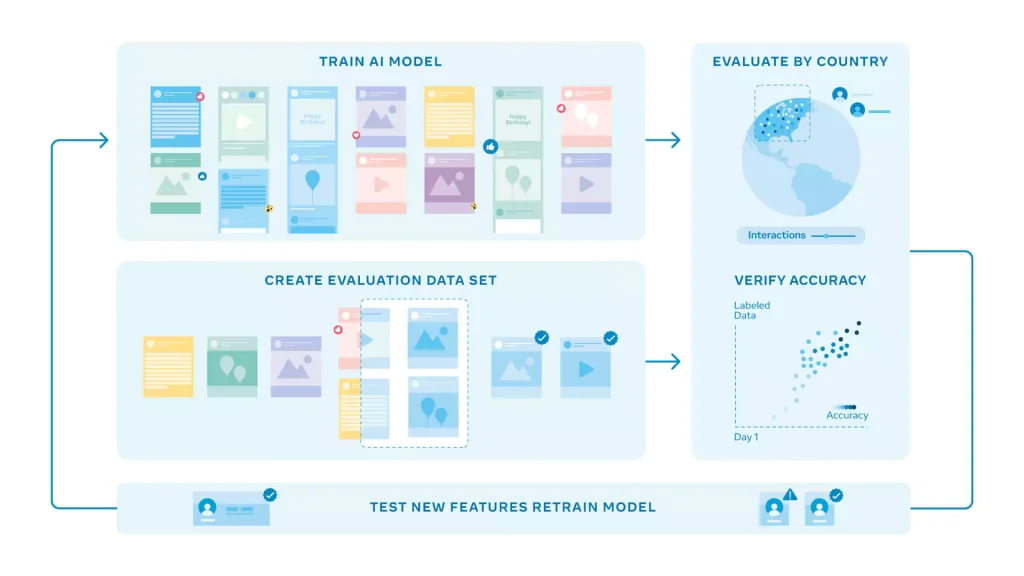

Instagram has rolled out a groundbreaking use of artificial intelligence (AI) to detect and protect teen users, a move announced in 2025 that’s catching global attention. This “adult classifier” system, developed by Meta, uses AI to identify users under 18 based on profile data, interactions, and even birthday posts, automatically enforcing stricter safety settings. Launched as part of a broader effort to safeguard younger audiences, it promises 98% accuracy in age estimation, but it’s sparking debates about privacy and parental control.

This isn’t just a tech update—it’s a worldwide story with implications for social media safety. With AI flagging teens, how will it balance protection and personal freedom? Let’s dive into the details.

The initiative addresses growing concerns about teen safety on social media, building on Meta’s prior investments in AI technology. The “adult classifier” analyzes signals like follower lists, content engagement, and friend messages—such as “happy 16th birthday”—to estimate age, as noted in a tech overview by Meta. This system, first tested in 2022, now enforces settings like private accounts and restricted messaging for detected teens, responding to pressure from parents and regulators worldwide.

The AI operates by processing vast datasets from user activity. It cross-references profile details with behavioral patterns, achieving a 98% accuracy rate in distinguishing users under 25, according to a detailed report on age detection. When it identifies a teen, Instagram applies safeguards: accounts default to private, adults can’t message them unless connected, and content filters block harmful material. This real-time adjustment aims to protect millions of users, but the scale of data involved raises questions.
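The birthday-message signal and the resulting safeguards can be illustrated with a toy sketch. This is not Meta's classifier, which reportedly combines many signals in a trained model. Everything here, including the regular expression, the `AccountSettings` fields, and the function names, is a hypothetical simplification of a single signal.

```python
import re
from dataclasses import dataclass

# Matches greetings such as "happy 16th birthday" and captures the age.
BIRTHDAY_RE = re.compile(
    r"happy\s+(\d{1,2})(?:st|nd|rd|th)\s+birthday", re.IGNORECASE
)


@dataclass
class AccountSettings:
    private: bool = False
    adult_dms_require_connection: bool = False
    sensitive_content_filter: bool = False


def age_hint_from_messages(messages):
    """Return the age from the most recent birthday greeting, or None."""
    for text in reversed(messages):
        match = BIRTHDAY_RE.search(text)
        if match:
            return int(match.group(1))
    return None


def apply_teen_defaults(settings, age_hint):
    """Switch on the stricter defaults when the hint suggests under 18."""
    if age_hint is not None and age_hint < 18:
        return AccountSettings(
            private=True,
            adult_dms_require_connection=True,
            sensitive_content_filter=True,
        )
    return settings


hint = age_hint_from_messages(["see you soon", "Happy 16th birthday!!"])
print(hint, apply_teen_defaults(AccountSettings(), hint))
```

A single greeting is weak evidence on its own, which is one reason a real system would weigh it against other signals and offer an appeal path for misclassified adults.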

Experts highlight the benefits. The 98% accuracy could shield teens from predators and mental health risks, a concern backed by an AP investigation into teen safety. For parents, it offers peace of mind, with options to monitor settings. Businesses might see safer platforms boost user trust, potentially increasing ad revenue. Yet, the system isn’t perfect—errors could misclassify adults as teens, limiting their access.

Risks are significant. AI relies on data, and biases in training sets—perhaps from uneven global representation—could lead to mistakes. Privacy advocates worry about the collection of personal data, including video selfies for verification, a method detailed in a Fast Company article on AI testing. Users can appeal, but the lack of transparency on data use is a gap. Cultural differences, like varying age norms, might also confuse the algorithm.

Public reaction adds another layer. On X, teens express frustration over lost control, with one posting, “AI deciding my account settings feels invasive.” Parents, however, praise the safety boost, calling it “long overdue.” This split, missing from official statements, could shape future tweaks, perhaps leading to opt-in options or clearer policies.

Long-term impact is another gap. Will AI detection hold up over years as teen behavior evolves? How will it handle new threats, like deepfakes or cyberbullying spikes? Meta plans a year-long review, but these questions linger. The system’s success could influence other platforms, like TikTok, to adopt similar tools.

Photo: Meta

As Instagram tests this AI teen detection, the world watches. Will it redefine social media safety or raise new privacy challenges? Early feedback will guide its future.

Share your views below. For more updates, visit briskfeeds.com.
