Cybersecurity

New Phishing Attack Uses Blob URLs to Steal Passwords

Cybercriminals have developed a sophisticated phishing technique that leverages Blob URLs to create fake login pages within users’ browsers and steal the credentials victims enter, according to a Hackread report. The method, uncovered by Cofense Intelligence, slips past traditional email security systems because the malicious page is generated locally in the browser rather than hosted on a server that scanners can inspect, which makes it much harder to detect. As phishing attacks grow more advanced, this tactic highlights the urgent need for updated defenses and user awareness to protect sensitive data.

The attack begins with a phishing email that appears legitimate and often redirects users through trusted platforms such as Microsoft’s OneDrive before landing on a fake login page. Unlike typical phishing sites hosted on external servers, these pages are created from Blob URLs: temporary, local content generated inside the user’s browser. TechRadar explains that because Blob URLs are not hosted on the public internet, security systems that scan emails for malicious links cannot easily detect them. The result is a convincing login page that captures credentials, such as the logins people use for tax accounts or encrypted-message portals, as detailed by Forbes. This stealthy approach mirrors a wider trend of attackers exploiting legitimate technology to evade detection.

Cofense Intelligence, as reported by Security Boulevard, first detected this technique in mid-2022, but its use has surged recently. The campaigns often lure users with prompts to log in to view encrypted messages, access tax accounts, or review financial alerts, exploiting trust in familiar brands. Cybersecurity News highlights that Blob URLs start with “blob:http://” or “blob:https://”, a detail users can check to identify potential threats. Even so, these attacks are hard to spot, not least because AI-based security tools are still learning to distinguish legitimate Blob URLs from malicious ones.
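The prefix check described above is simple enough to automate. The Python sketch below, with hypothetical function names, flags URLs that begin with `blob:http://` or `blob:https://` and extracts the origin that follows the `blob:` prefix, which identifies the site whose page generated the content. It assumes the usual `blob:<origin>/<id>` shape that browsers produce for `URL.createObjectURL`; it inspects only the URL string and cannot tell on its own whether the page behind it is malicious.

```python
from typing import Optional
from urllib.parse import urlparse


def is_blob_url(url: str) -> bool:
    """Return True if the URL uses the blob: scheme with an http(s) origin."""
    return url.strip().lower().startswith(("blob:http://", "blob:https://"))


def blob_origin(url: str) -> Optional[str]:
    """Return the origin that created a blob: URL, or None for non-blob URLs.

    Browsers format Blob URLs as blob:<origin>/<id>, so the text after
    "blob:" can itself be parsed as an ordinary http(s) URL.
    """
    if not is_blob_url(url):
        return None
    # The outer parse yields scheme "blob"; its path is the inner http(s) URL.
    inner = urlparse(urlparse(url.strip()).path)
    return f"{inner.scheme}://{inner.netloc}"


if __name__ == "__main__":
    print(blob_origin("blob:https://example.com/550e8400-e29b-41d4-a716-446655440000"))
    print(is_blob_url("https://example.com/login"))
```

A `blob:` URL whose origin does not match the service the page claims to represent, such as a Microsoft-branded login form whose origin is an unrelated domain, would be a strong warning sign.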

Protecting against this threat requires a multi-layered approach. Experts recommend not clicking links in unsolicited emails, especially those that prompt a login, and verifying URLs directly with trusted sources. Two-factor authentication (2FA) can add an extra layer of security, so that a stolen password alone is not enough to access an account. Organizations should also invest in advanced email security solutions that can detect unusual redirect patterns, since traditional Secure Email Gateways (SEGs) often fail to catch these attacks, per Security Boulevard.
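To illustrate what “unusual redirect patterns” might mean in practice, here is a minimal Python sketch that inspects a redirect chain, assumed to have been recorded by a sandbox that followed the emailed link. The `TRUSTED_HOSTS` allow-list, the function names, and the two rules are hypothetical examples, not the logic of any particular email security product.

```python
from typing import List
from urllib.parse import urlparse

# Hypothetical allow-list of hosts an organization treats as trusted
# (OneDrive's own domains are used here purely as an example).
TRUSTED_HOSTS = {"onedrive.live.com", "1drv.ms"}


def _host(url: str) -> str:
    """Lowercased hostname, or "" for URLs without one (e.g. blob: URLs)."""
    return (urlparse(url).hostname or "").lower()


def redirect_warnings(chain: List[str]) -> List[str]:
    """Return reasons why a redirect chain looks suspicious.

    `chain` is the ordered list of URLs visited, starting with the link
    in the email and ending with the final page.
    """
    warnings: List[str] = []
    if not chain:
        return warnings
    hosts = [_host(u) for u in chain]
    # Rule 1: a trusted platform hands the user off to an untrusted host.
    if hosts[0] in TRUSTED_HOSTS and any(h and h not in TRUSTED_HOSTS for h in hosts[1:]):
        warnings.append("trusted host redirects to an untrusted host")
    # Rule 2: the chain ends at content generated locally in the browser.
    if urlparse(chain[-1]).scheme == "blob":
        warnings.append("chain ends at a locally generated blob: URL")
    return warnings


if __name__ == "__main__":
    chain = [
        "https://onedrive.live.com/redir?id=example",
        "https://attacker.example/landing",
        "blob:https://attacker.example/1f2e3d4c",
    ]
    for reason in redirect_warnings(chain):
        print(reason)
```

Neither rule is conclusive on its own, since legitimate services also redirect and use Blob URLs; the point is that the combination of a trusted starting host, an untrusted hop, and a locally generated final page is the kind of pattern a scanner can score.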

The broader implications of Blob URL phishing are significant. As remote work and digital transactions increase, so does the risk of credential theft, which can lead to financial fraud or data breaches. The digital divide further complicates the issue, as not all users have the tools or knowledge to recognize such threats. The abuse of legitimate technologies like Blob URLs, which services such as YouTube use to stream video in the browser, also underscores the need for better regulation and more careful deployment of web technologies.

This new phishing tactic serves as a wake-up call for both users and security providers. As cybercriminals continue to innovate, staying ahead requires constant vigilance, improved technology, and widespread education on digital safety. The rise of Blob URL attacks highlights the evolving nature of cyber threats and the importance of proactive defense strategies. What do you think about this sneaky phishing method—how can we better protect ourselves online? Share your thoughts in the comments—we’d love to hear your perspective on this growing cybersecurity challenge.

Billie Eilish AI Fakes Flood Internet: Singer Slams “Sickening” Doctored Met Gala Photos

Billie Eilish is the latest high-profile target of deepfake technology: the Grammy-winning singer has publicly debunked viral images that claimed to show her at the 2025 Met Gala. Eilish, who confirmed she did not attend the star-studded event, called the AI-generated pictures “sickening,” highlighting the growing crisis of celebrity image misuse and online misinformation in the USA and beyond.

LOS ANGELES, USA – The internet was abuzz with photos that seemed to show Billie Eilish at the 2025 Met Gala, but the singer has forcefully shut down the rumors, revealing that the images were entirely fabricated. In a recent social media statement, Eilish confirmed she was nowhere near the iconic fashion event and slammed the AI-generated images as “sickening.” The incident throws a harsh spotlight on the rapidly escalating problem of deepfakes and the unauthorized use of celebrity likenesses, a concern that increasingly affects public figures and is stirring debate across the United States.

The fake images, which depicted Eilish in various elaborate outfits supposedly on the Met Gala red carpet, quickly went viral across platforms like X (formerly Twitter) and Instagram. Many fans initially believed them to be real, underscoring the sophisticated nature of current AI image generation tools. However, Eilish took to her own channels to set the record straight. “Those are FAKE, that’s AI,” she reportedly wrote, expressing her disgust at the digitally manipulated pictures. “It’s sickening to me how easily people are fooled.” Her frustration highlights a growing unease about how AI can distort reality, a problem also seen with other AI systems, such as Elon Musk’s Grok AI spreading misinformation.

Billie Eilish reacts to people trashing her Met Gala outfit this year, which was AI-generated as she wasn’t there:

“Seeing people talk about what I wore to this year’s Met Gala being trash… I wasn’t there. That’s AI. I had a show in Europe that night… let me be!” pic.twitter.com/z9Rj4QEAKQ

— Pop Base (@PopBase) May 15, 2025

This incident is far from isolated. Deepfake technology, which uses artificial intelligence to create realistic but fabricated images and videos, has become a major concern. Celebrities are frequent targets, and their likenesses are often used without consent in contexts ranging from harmless parodies to malicious hoaxes and even non-consensual pornography. The ease with which these fakes can be created and spread poses a significant threat to personal reputation and public trust. The entertainment industry is grappling with AI on multiple fronts, including stars calling for copyright protection against AI.

The “Sickening” Reality of AI-Generated Content

Eilish’s strong condemnation of the fakes reflects a broader sentiment among artists and public figures who feel increasingly vulnerable to digital manipulation. The incident raises critical questions about:

- Consent and Likeness: Using a person’s image without authorization, even in AI-generated form, undermines their rights and control over their own persona.

- The Spread of Misinformation: When AI fakes are believable enough to dupe the public, they become potent tools for spreading false narratives.

- The Difficulty in Detection: As AI technology advances, telling real from fake becomes increasingly challenging for the average internet user. This is a concern that even tech giants are trying to address, with OpenAI recently committing to more transparency about AI model errors.

The Met Gala, known for its high fashion and celebrity attendance, is a prime target for such fabrications because of intense public interest and the event’s visual nature. The Eilish fakes are a stark reminder that even high-profile events are not immune to this form of digital deception. Concern about AI misuse extends to many areas of life, including the use of AI by police forces.

Legal and ethical frameworks are struggling to keep pace with rapid advances in AI. While some jurisdictions have begun exploring legislation to combat malicious deepfakes, the global and often anonymous nature of the internet makes enforcement difficult. For victims like Billie Eilish, speaking out is one of the few recourses available to debunk the fakes and raise awareness. As AI becomes more integrated into content creation, the line between authentic and synthetic media will continue to blur, making critical thinking and media literacy more important than ever for consumers. Public demand for authenticity is also driving calls for clearer labeling, such as requiring AI chatbots to disclose that they are not human.

Alleged 89 Million Steam 2FA Codes Leaked, Twilio Denies Breach

On May 14, 2025, a database allegedly containing 89 million Steam two-factor authentication (2FA) codes surfaced online and quickly drew attention. The claim has not been verified by official sources, but if genuine it would be a significant security incident.

The leak was claimed to include sensitive details such as Steam account names, email addresses, and 2FA codes, and the database was reportedly advertised on a hacking forum for $5,000. The data reportedly contained historic SMS text messages with one-time passcodes, including recipient phone numbers, confirmation codes for account access, and metadata such as timestamps and delivery statuses. If authentic, this information could expose users to phishing attacks and session hijacking, in which attackers intercept or replay 2FA codes to bypass login protections.
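Whether leaked codes are still useful to an attacker depends on how the service validates them, which the reports do not describe. As a generic sketch, assuming a service that issues six-digit SMS codes, the Python below shows two properties that limit replay: each code expires after a short window (five minutes is an assumed value) and is deleted after its first successful use. The class and method names are hypothetical, and this is not a description of how Steam or Twilio implements 2FA.

```python
import hmac
import secrets
import time
from typing import Dict, Optional, Tuple

CODE_TTL_SECONDS = 300  # assumed validity window for an SMS code


class OneTimeCodes:
    """Issues and verifies short-lived, single-use numeric codes (illustrative only)."""

    def __init__(self, ttl: float = CODE_TTL_SECONDS) -> None:
        self.ttl = ttl
        # account -> (code, time issued); at most one pending code per account
        self._pending: Dict[str, Tuple[str, float]] = {}

    def issue(self, account: str, now: Optional[float] = None) -> str:
        now = time.time() if now is None else now
        code = f"{secrets.randbelow(10**6):06d}"  # six random digits
        self._pending[account] = (code, now)
        return code

    def verify(self, account: str, submitted: str, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        entry = self._pending.get(account)
        if entry is None:
            return False  # no outstanding code, e.g. it was already used
        code, issued_at = entry
        if now - issued_at > self.ttl:
            del self._pending[account]  # expired codes are discarded
            return False
        if not hmac.compare_digest(code, submitted):
            return False  # wrong code (a real service would also rate-limit attempts)
        del self._pending[account]  # single use: replaying this code will fail
        return True
```

Under these assumptions, a replayed code fails on its second use and a code captured long ago fails the expiry check. Intercepting a code in real time, for example through a phishing page, is a different problem that expiry alone does not solve.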

Following the emergence of these claims, Twilio, the communications platform reportedly involved, denied any breach, stating that it found no evidence of a breach on its systems and dismissing the notion that the data originated from its platform. The denial matters because Twilio provides authentication services for many platforms, including Steam. If the leak is verified, it could have far-reaching consequences and prompt a reevaluation of how cybersecurity measures are implemented.

Valve Corporation, which operates Steam, has not yet commented on the alleged breach. The silence has left users uncertain about the safety of their accounts and amplified concerns about personal information and account security. The incident highlights the broader challenge of maintaining user trust as digital threats grow more sophisticated.

The focus now is on verifying the leak’s authenticity and understanding its implications. Either way, the episode is a stark reminder of the importance of robust account security. What are your thoughts on the alleged Steam 2FA code leak and Twilio’s denial? Does it signal a broader issue with online security, or is it an isolated incident? Share your insights in the comments; we’re eager to hear your perspective on this developing story.

Fake AI Video Platforms Spread Noodlophile Stealer Malware via Facebook Ads

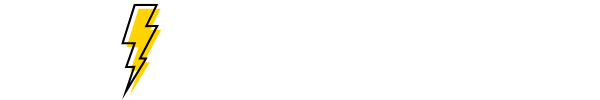

Cybercriminals are exploiting the popularity of AI technology by using fake AI video generation platforms to distribute a new malware strain called Noodlophile Stealer, according to a Morphisec report. Promoted through deceptive Facebook ads, these fraudulent sites trick users into downloading malicious software that steals sensitive data, such as browser credentials and cryptocurrency wallets. As AI tools gain mainstream traction, this emerging threat highlights the dark side of innovation, raising urgent concerns about online safety and the need for stronger cybersecurity measures.

The Noodlophile Stealer campaign uses a multi-stage attack chain. Morphisec’s analysis reveals that attackers lure users through Facebook groups with promises of instant AI-generated videos. Victims are prompted to upload images to the fake site, expecting a custom video in return. Instead, they download a malicious ZIP file disguised as a legitimate tool, often posing as a version of CapCut, a popular video editing app. Once executed, the file deploys Noodlophile Stealer, which harvests browser credentials, cryptocurrency wallets, and other sensitive data, and in some cases also installs the XWorm remote access trojan. This tactic echoes other AI-themed cyber threats, in which attackers exploit trust in new technology to target unsuspecting users.

Photo: Morphisec

The scale of the threat is alarming. Noodlophile Stealer, previously undocumented in public malware trackers, targets a wide range of data, including cookies, passwords, and credit card information from browsers such as Google Chrome, Microsoft Edge, and Mozilla Firefox. Facebook ads amplify the campaign’s reach, turning social media platforms into an inadvertent distribution network for these fake tools and posing significant risks to user security.

The fake platforms often look legitimate, complete with professional designs and cookie banners to build trust, but their true intent becomes clear once the malicious payload is downloaded. Cybersecurity News reported that some sites, such as editproai[dot]pro, trick users into installing malware disguised as an AI-based editing tool called “EditPro.” This strategy preys on growing enthusiasm for AI content creation and creates opportunities to exploit victims who are unfamiliar with such risks.
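Security reports often “defang” malicious domains, as in editproai[dot]pro, so they cannot be clicked by accident. The sketch below, assuming a simple in-house blocklist and using hypothetical helper names, converts such indicators back to normal form and checks whether a URL’s host matches a listed domain or one of its subdomains.

```python
from urllib.parse import urlparse


def refang(indicator: str) -> str:
    """Undo common defanging conventions such as "[dot]", "[.]" and "hxxp"."""
    return (
        indicator.replace("[dot]", ".")
        .replace("[.]", ".")
        .replace("hxxp", "http")
    )


# Hypothetical blocklist, seeded with the domain named in the report
# (kept defanged in source and refanged at runtime).
BLOCKED_DOMAINS = {refang("editproai[dot]pro")}


def is_blocked(url: str) -> bool:
    """True if the URL's host is a blocked domain or a subdomain of one."""
    host = (urlparse(refang(url)).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in BLOCKED_DOMAINS)
```

Matching on `"." + d` rather than a bare suffix keeps an unrelated host whose name merely ends in the same letters from being flagged. A static list only catches domains already reported, so it complements rather than replaces the behavioral detection experts recommend.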

Protecting against Noodlophile Stealer requires vigilance and robust cybersecurity measures. Experts recommend avoiding downloads from unverified sources, especially those promoted through social media ads, and using reputable antivirus software to detect and block malicious files. Morphisec advises investing in tools with dynamic detection and behavioral rules, such as Palo Alto Networks’ Cortex XDR, to combat evolving threats. However, the digital divide poses a challenge, as not all users have access to such tools or the knowledge to identify scams, which underscores the need for broader education on digital safety.
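Because the payload arrives as a ZIP archive, one basic precaution is to list an archive’s contents before extracting or running anything. The Python sketch below uses the standard `zipfile` module to flag entries whose names end in extensions that Windows will execute or load; the extension list is an assumed, non-exhaustive example, the function name is hypothetical, and the check is no substitute for antivirus or behavioral detection.

```python
import io
import zipfile
from typing import IO, List, Union

# Extensions Windows will run or load directly (assumed, non-exhaustive list).
RISKY_EXTENSIONS = (
    ".exe", ".scr", ".com", ".pif", ".bat", ".cmd",
    ".msi", ".dll", ".lnk", ".js", ".vbs", ".ps1",
)


def risky_members(archive: Union[str, IO[bytes]]) -> List[str]:
    """List archive entries whose names end in an executable extension.

    Trailing spaces and dots are stripped first because Windows ignores them,
    so a name like "clip.mp4.exe " would still run as an .exe.
    """
    flagged = []
    with zipfile.ZipFile(archive) as zf:
        for name in zf.namelist():
            if name.rstrip(" .").lower().endswith(RISKY_EXTENSIONS):
                flagged.append(name)
    return flagged


if __name__ == "__main__":
    # Build a small in-memory archive for demonstration.
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        zf.writestr("readme.txt", "hello")
        zf.writestr("video.mp4.exe", "not a real program")
    print(risky_members(buf))
```

An archive that promises a finished video but contains a program is exactly the mismatch this check surfaces; the function never extracts or executes anything.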

The rise of Noodlophile Stealer highlights the broader implications of AI’s rapid adoption. While AI offers transformative potential for content creation, it also gives cybercriminals new avenues to exploit. Social media platforms like Facebook must strengthen their ad vetting processes to prevent the spread of such campaigns, and users must remain cautious when engaging with unfamiliar AI tools. As AI continues to evolve, balancing innovation with security will be critical. What do you think about the risks of fake AI platforms, and how can users stay safe online? Share your thoughts in the comments; we’d love to hear your perspective on this growing threat.
